THE COMPUTER VISION PLATFORM TO ENHANCE QUALITY STANDARDS AT SCALE

Use Computer Vision, a Visual AI technology, to automate quality control during your field operations. Improve cost-efficiency and productivity by helping your field workers get the job done right the first time and by streamlining your business processes.

EFFICIENCY IN THE FIELD & THE OFFICE

Empower field workers to get the job done right the first time

- Ensure your workers provide complete and accurate photo reporting

- Give workers real-time feedback on the conformity of their work to reduce errors

Empower your organization with unprecedented field data and automate business processes

- Access operational performance data for 360° visibility into the field

- Automate the payment of your contractors based on the AI validation of their work

- Monitor your infrastructure automatically, operation after operation

OUR COMPUTER VISION PLATFORM COMPONENTS

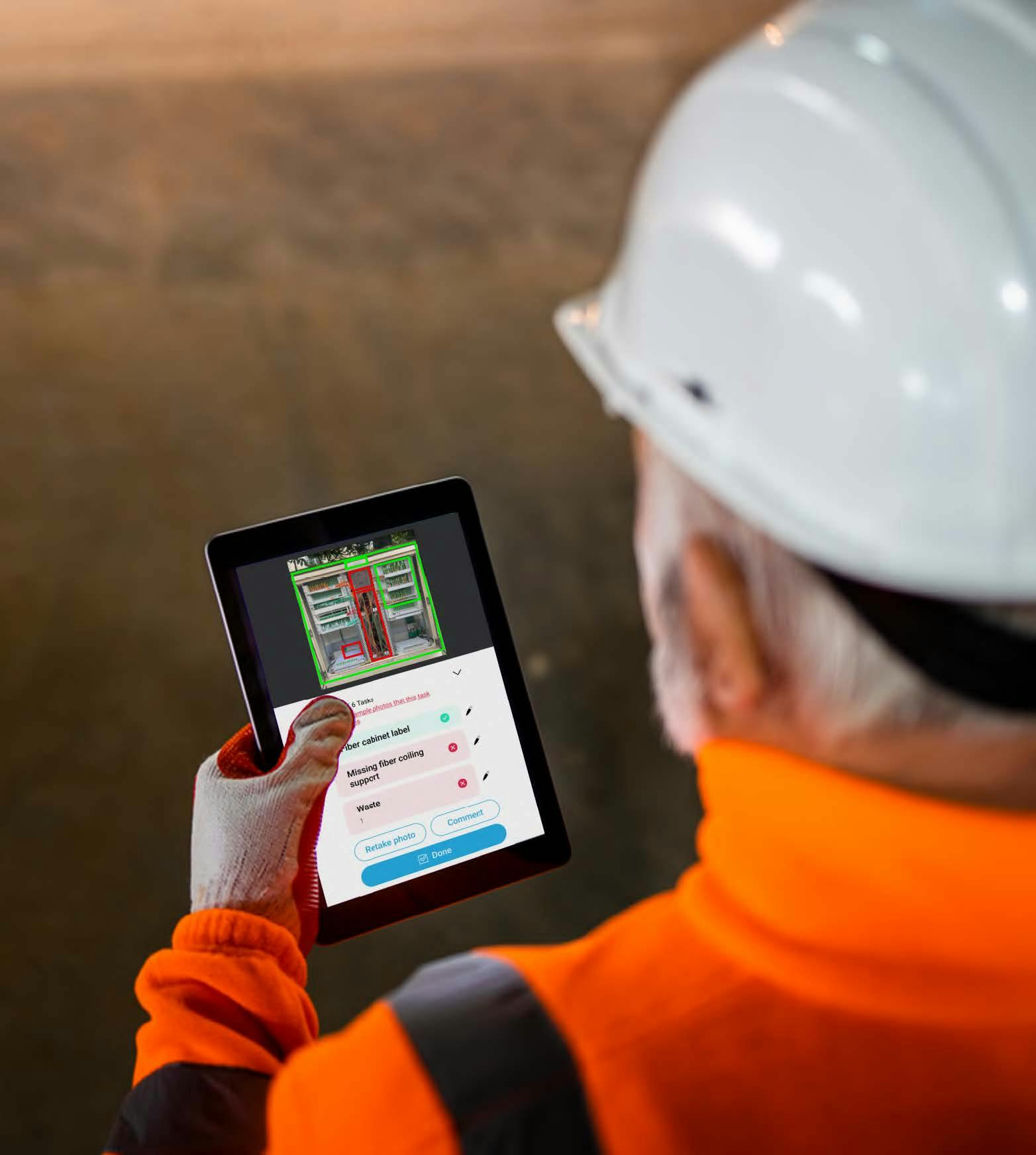

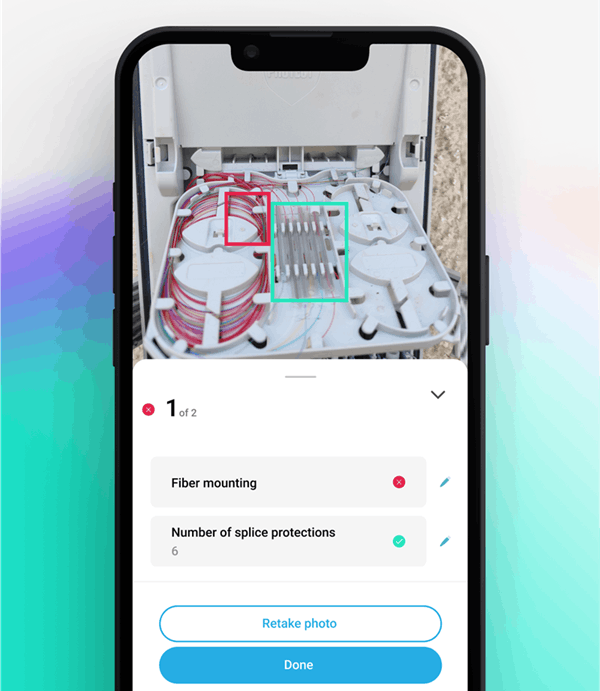

The Ultimate Field Worker - AI interface

Deepomatic’s mobile interface provides the best quality control experience

An intuitive user experience

Our app allows field workers to capture actionable photos and provides clear feedback on quality control results. This ensures that workers understand the AI analysis and can correct any errors or oversights in their work.

Facilitated integration

Effortlessly integrate our automated quality control solution with your existing field tools using our Connectors. This eliminates the need for in-house user experience development and accelerates your time to value.

30,000+

field workers use Deepomatic

20M

field operations analyzed every year

<2 sec

to analyze a photo

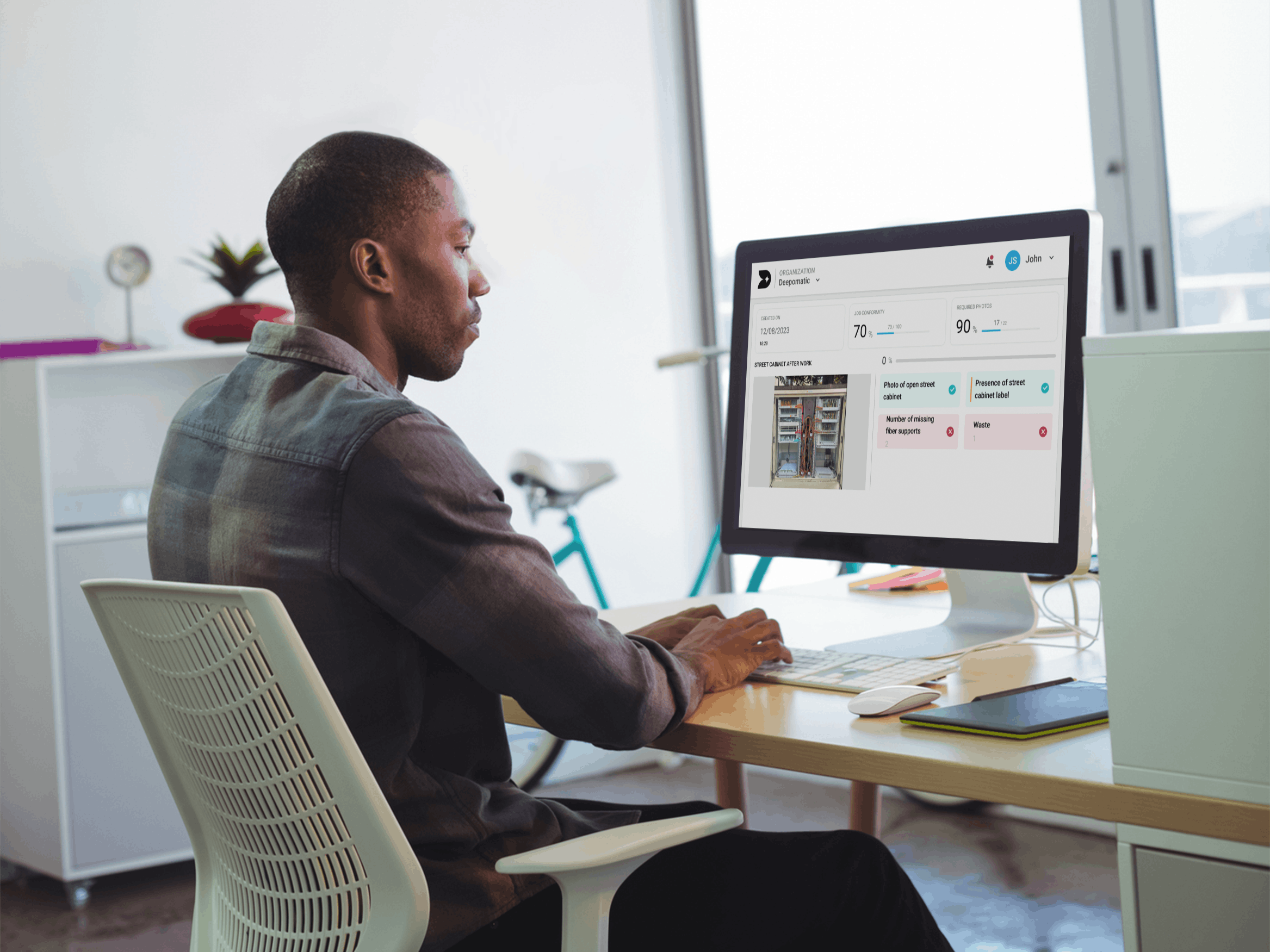

360° Visibility Into The Field With Our Dashboards

Leverage AI analysis and generated field data to boost efficiency and automate your business processes.

Automate back office processes to enhance efficiency

Say goodbye to long and costly manual quality control.

Leverage AI to validate photos and field jobs, enabling automation for:

- Asset inventories

- Contractor payments

- Client billing

Access high-level indicators to follow how your teams and contractors perform

Use our Insight dashboard to monitor the overall quality of your operations, identify potential bottlenecks and implement corrective measures.

Swiftly integrate our field performance data into your own BI dashboard.

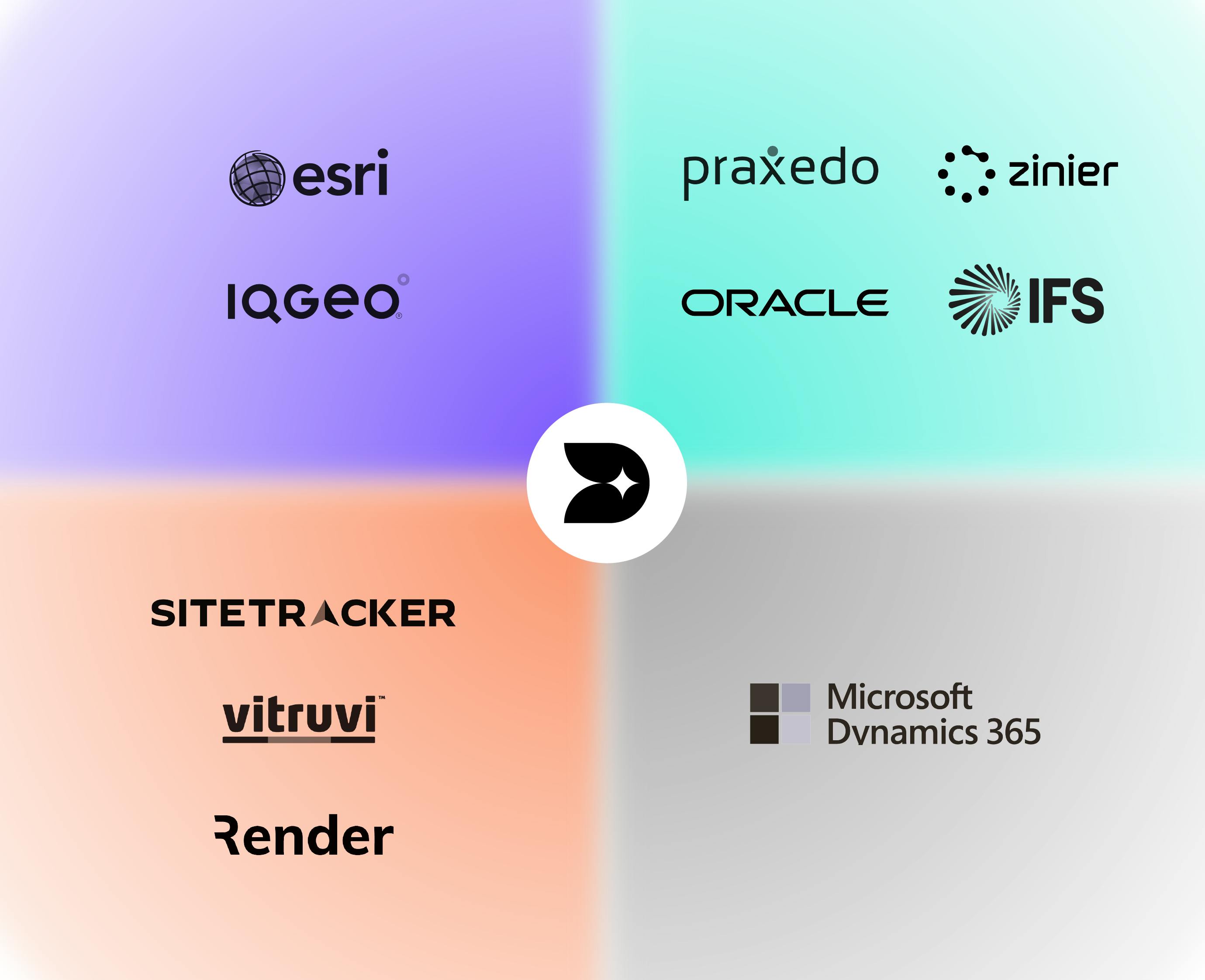

INTEGRATING OUR COMPUTER VISION PLATFORM

We Seamlessly Integrate With Your Existing Tech Ecosystem

Deepomatic partners with a growing ecosystem of field service management (FSM) and geographic information system (GIS) software providers through off-the-shelf connectors.

This collaboration offers our clients integrated solutions that boost field worker productivity.

Our solution can also be deployed in other field tools that you may be using, thanks to our connectors or through a complete API integration.

STATE-OF-THE-ART COMPUTER VISION PLATFORM

3 MODELS TAILORED TO YOUR NEEDS

Starter

Best suited for organisations

- With a low volume of jobs

- Where off-the-shelf AI checks are sufficient

- When no integration is required

Improves first-time-right rates through standard automation.

Business

Best suited for organisations

- With a moderate volume of jobs (tens of thousands to hundreds of thousands)

- Where custom AI checks are needed

- When simple integration into existing mobile applications is required

Ensures field workers systematically do the job right the first time.

Enterprise

Best suited for organisations

- With a high volume of jobs (hundreds of thousands to millions)

- Where high customization in AI, integrations, and connectors is required

- When simple integration into existing mobile applications is required

Ensures first-time-right across all operations and automates advanced business processes.